Don't underestimate your mind's eye

(Medical Xpress)—Take a look around, and what do you see? Much more than you think you do, thanks to your finely tuned mind's eye, which processes images without your even knowing.

A University of Arizona study has found that objects in our visual field of which we are not consciously aware still may influence our decisions. The findings refute traditional ideas about visual perception and cognition, and they could shed light on why we sometimes make decisions—stepping into a street, choosing not to merge into a traffic lane—without really knowing why.

Laura Cacciamani, who recently earned her doctorate in psychology with a minor in neuroscience, has found supporting evidence. Cacciamani is the lead author of a study, published online in the journal Attention, Perception and Psychophysics, that shows the brain's subconscious processing has an impact on behavior and decision-making.

This seems to make evolutionary sense, Cacciamani said. Early humans would have required keen awareness of their surroundings on a subliminal level in order to survive.

"Your brain is always monitoring for meaning in the world, to be aware of your general surroundings and potential predators," Cacciamani said. "You can be focused on a task, but your brain is assessing the meaning of everything around you – even objects that you're not consciously perceiving."

The study builds on the findings of earlier research by Jay Sanguinetti, who also was a doctoral candidate in the UA Department of Psychology. Both studies go against conventional wisdom among vision scientists.

"According to the traditional view, the brain accesses the meaning – or the memory – of an object after you perceive it," Cacciamani said. "Against this view, we have now shown that the meaning of an object can be accessed before conscious perception.

"We're showing that there's more interplay between memory and perception than previously has been assumed," she said.

Cacciamani asked participants in her study to classify nouns that appeared on a computer screen as naming a natural object or artificial object by pressing one of two buttons labeled "natural" or "artificial." For example, the word "leaf" indicates an object found in nature, while "anchor" indicates a man-made or artificial object.

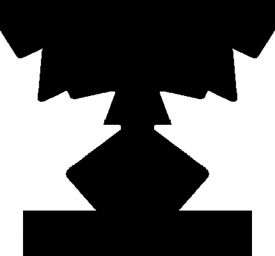

But before each word appeared on the screen, the computer flashed a black silhouette that – unknown to participants – had portions of natural or artificial objects suggested along the white outside regions (called the "ground" regions) of the image. Participants were not told to look for anything in the silhouettes, which were flashed so quickly – for 50 milliseconds – that it would have been difficult to notice the objects in the ground regions even if someone knew what to look for. Participants never were aware that the silhouettes' grounds suggested recognizable objects.

Cacciamani measured how well study participants performed at categorizing the words as natural or artificial by recording speed and accuracy.

"We found that participants performed better on the natural/artificial word task when that word followed a silhouette whose ground contained an object of the same rather than a different category," Cacciamani said.

This indicates that the brain accessed the meaning of the objects in the silhouette's grounds even though study participants didn't know the objects were there, she said.
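For readers curious how a comparison like this might be tallied, here is a minimal sketch in Python. It is not the study's analysis code. The trial records are invented purely for illustration, the names `trials` and `summarize` are hypothetical, and averaging response times over correct trials only is an assumption borrowed from common practice, not something the study description states.

```python
from statistics import mean

# Hypothetical trial records, invented for illustration only (not study data).
# Each trial stores the category suggested in the silhouette's ground, the
# category of the word that followed, the response time, and correctness.
trials = [
    {"ground": "natural", "word": "natural", "rt_ms": 600, "correct": True},
    {"ground": "artificial", "word": "natural", "rt_ms": 650, "correct": True},
    {"ground": "artificial", "word": "artificial", "rt_ms": 610, "correct": True},
    {"ground": "natural", "word": "artificial", "rt_ms": 640, "correct": False},
]


def summarize(trials):
    """Return mean response time (correct trials only) and accuracy
    for same-category and different-category trials."""
    groups = {"same": [], "different": []}
    for t in trials:
        key = "same" if t["ground"] == t["word"] else "different"
        groups[key].append(t)

    summary = {}
    for key, group in groups.items():
        # Assumption: only correct responses contribute to the mean time.
        correct_rts = [t["rt_ms"] for t in group if t["correct"]]
        summary[key] = {
            "mean_rt_ms": mean(correct_rts) if correct_rts else None,
            "accuracy": (
                sum(t["correct"] for t in group) / len(group) if group else None
            ),
        }
    return summary


summary = summarize(trials)
print(summary)
```

In a tally like this, faster and more accurate responses in the "same" group than in the "different" group would correspond to the pattern Cacciamani describes.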

"Every day our visual systems are bombarded with more information than we can consciously be aware of," Cacciamani said. "We're showing that your brain might still be accessing information without your conscious awareness, and that could influence your behavior."